Chapter 6.2.2 Multiple Linear Regression

In Chapter 6 we introduced ideas related to modeling, in particular that the fundamental premise of modeling is to make explicit the relationship between an outcome variable and an explanatory/predictor variable . Recall further the synonyms that we used to also denote as the dependent variable and as an independent variable or covariate.

There are many modeling approaches one could take, among the most well-known being linear regression, which was the focus of the last chapter. Whereas in the last chapter we focused solely on regression scenarios where there is only one explanatory/predictor variable, in this chapter, we now focus on modeling scenarios where there is more than one. This case of regression with more than one explanatory variable is known as multiple regression. You can imagine when trying to model a particular outcome variable, like teaching evaluation score as in Section 6.1 or life expectancy as in Section 6.2, it would be very useful to incorporate more than one explanatory variable.

Since our regression models will now consider more than one explanatory/predictor variable, the interpretation of the associated effect of any one explanatory/predictor variables must be made in conjunction with the others. For example, say we are modeling individuals’ incomes as a function of their number of years of education and their parents’ wealth.

When interpreting the effect of education on income, one has to consider the effect of their parents’ wealth at the same time, as these two variables are almost certainly related. Make note of this throughout this chapter and as you work on interpreting the results of multiple regression models into the future.

Needed packages

Let’s load all the packages needed for this chapter (this assumes you’ve already installed them). Read Section 2.3 for information on how to install and load R packages.

library(ggplot2)

library(dplyr)

library(moderndive)

library(ISLR)

library(skimr)

What’s to come?

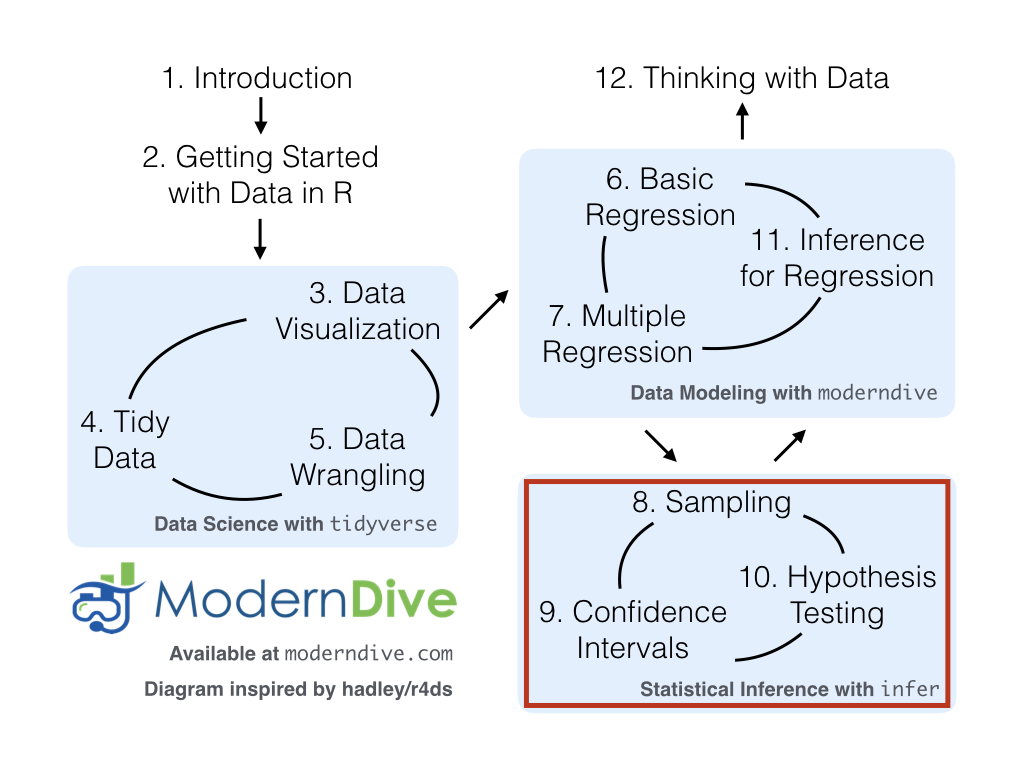

Congratulations! We’re ready to proceed to the third portion of this book: “statistical inference” using a new package called infer. Once we’ve covered Chapters 8 on sampling, 9 on confidence intervals, and 10 on hypothesis testing, we’ll come back to the models we’ve seen in “data modeling” in Chapter 11 on inference for regression. As we said at the end of Chapter 6, we’ll see why we’ve been conducting the residual analyses from Subsections 7.1.4 and 7.2.5. We are actually verifying some very important assumptions that must be met for the std_error (standard error), p_value, conf_low and conf_high (the end-points of the confidence intervals) columns in our regression tables to have valid interpretation.

Up next:

One numerical & one categorical explanatory variable

Let’s revisit the instructor evaluation data introduced in Section 6.1, where we studied the relationship between instructor evaluation scores and their beauty scores. This analysis suggested that there is a positive relationship between bty_avg and score, in other words as instructors had higher beauty scores, they also tended to have higher teaching evaluation scores. Now let’s say instead of bty_avg we are interested in the numerical explanatory variable age and furthermore we want to use a second explanatory variable , the (binary) categorical variable gender.

Note: This study only focused on the gender binary of "male" or "female" when the data was collected and analyzed years ago. It has been tradition to use gender as an “easy” binary variable in the past in statistical analyses. We have chosen to include it here because of the interesting results of the study, but we also understand that a segment of the population is not included in this dichotomous assignment of gender and we advocate for more inclusion in future studies to show representation of groups that do not identify with the gender binary. We now resume our analyses using this evals data and hope that others find these results interesting and worth further exploration.

Our modeling scenario now becomes

- A numerical outcome variable . As before, instructor evaluation score.

- Two explanatory variables:

- A numerical explanatory variable : in this case, their age.

- A categorical explanatory variable : in this case, their binary gender.

Multiple regression: Interaction model

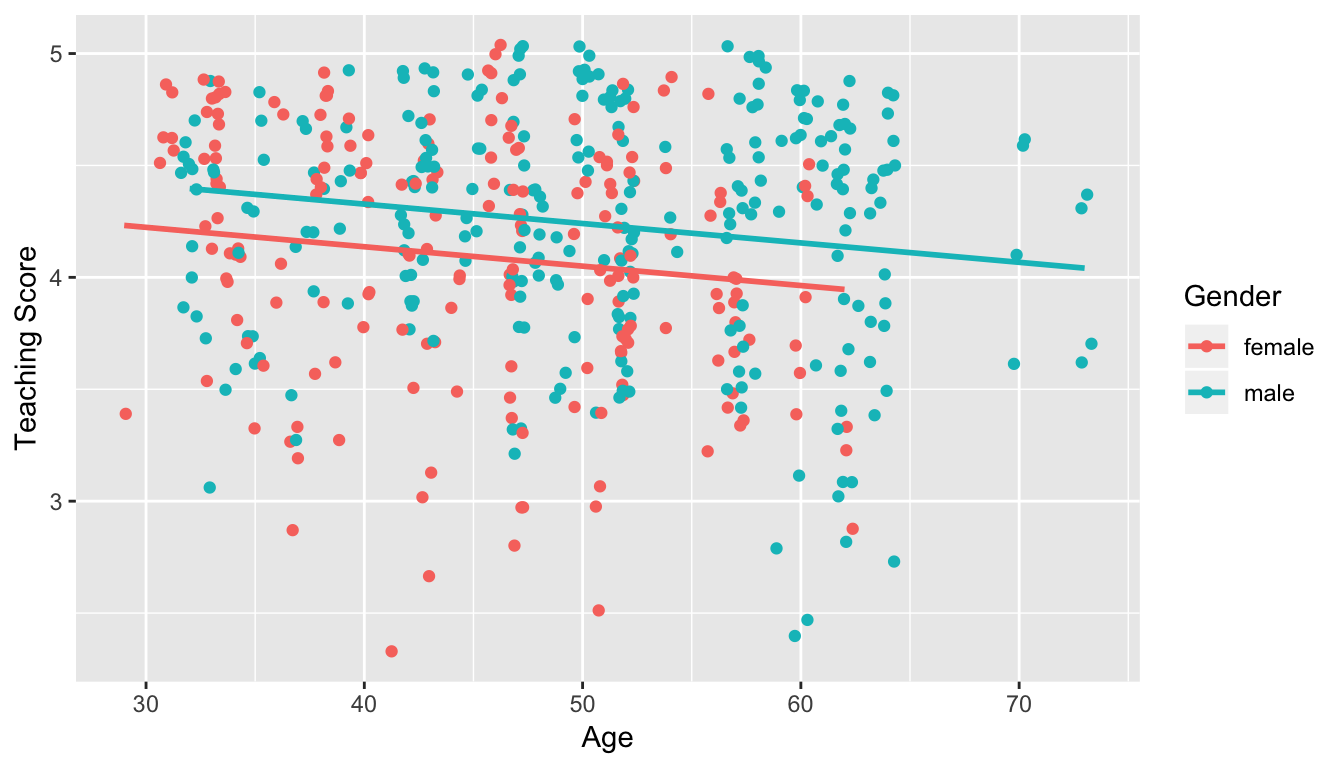

We say a model has an interaction effect if the associated effect of one variable depends on the value of another variable. These types of models usually prove to be tricky to view on first glance because of their complexity. In this case, the effect of age will depend on the value of gender. Put differently, the effect of age on teaching scores will differ for men and for women, as was suggested by the different slopes for men and women in our visual exploratory data analysis in Figure 7.4.

Let’s fit a regression with an interaction term. Instead of using the + sign in the enumeration of explanatory variables, we use the * sign. Let’s fit this regression and save it in score_model_3, then we get the regression table using the get_regression_table() function as before.

score_model_interaction <- lm(score ~ age * gender, data = evals_ch7)

get_regression_table(score_model_interaction)| term | estimate | std_error | statistic | p_value | lower_ci | upper_ci |

|---|---|---|---|---|---|---|

| intercept | 4.883 | 0.205 | 23.80 | 0.000 | 4.480 | 5.286 |

| age | -0.018 | 0.004 | -3.92 | 0.000 | -0.026 | -0.009 |

| gendermale | -0.446 | 0.265 | -1.68 | 0.094 | -0.968 | 0.076 |

| age:gendermale | 0.014 | 0.006 | 2.45 | 0.015 | 0.003 | 0.024 |

The modeling equation for this scenario is:

ˆy = b0+b1⋅x1+b2⋅x2+b3⋅x1⋅x2

ˆscore = b0+bage⋅age+bmale⋅1is male(x)+bage,male⋅age⋅1is male(x)

Oof, that’s a lot of rows in the regression table output and a lot of terms in the model equation. The fourth term being added on the right hand side of the equation corresponds to the interaction term.

Let’s simplify things by considering men and women separately. First, recall that 1is male(x) equals 1 if a particular observation (or row in evals_ch7) corresponds to a male instructor. In this case, using the values from the regression table the fitted value of is:

ˆscore=b0+bage⋅age+bmale⋅1is male(x)+bage,male⋅age⋅1is male(x)

=b0+bage⋅age+bmale⋅1+bage,male⋅age⋅1=(b0+bmale)+(bage+bage,male)⋅age

=(4.883+−0.446)+(−0.018+0.014)⋅age

=4.437−0.004⋅age

Second, recall that equals 0 if a particular observation corresponds to a female instructor. Again, using the values from the regression table the fitted value of is:

ˆscore=b0+bage⋅age+bmale⋅1is male(x)+bage,male⋅age⋅1is male(x)

=b0+bage⋅age+bmale⋅0+bage,maleage⋅0

=b0+bage⋅age

=4.883−0.018⋅age

Let’s summarize these values in a table:

| Gender | Intercept | Slope for age |

|---|---|---|

| Male instructors | 4.44 | -0.004 |

| Female instructors | 4.88 | -0.018 |

We see that while male instructors have a lower intercept, as they age, they have a less steep associated average decrease in teaching scores: 0.004 teaching score units per year as opposed to -0.018 for women. This is consistent with the different slopes and intercepts of the red and blue regression lines fit in Figure 7.4. Recall our definition of a model having an interaction effect: when the associated effect of one variable, in this case age, depends on the value of another variable, in this case gender.

But how do we know when it’s appropriate to include an interaction effect? For example, which is the more appropriate model? The regular multiple regression model without an interaction term we saw in Section 7.2.2 or the multiple regression model with the interaction term we just saw? We’ll revisit this question in Chapter 11 on “inference for regression.”

Multiple regression: Parallel slopes model

Much like we started to consider multiple explanatory variables using the + sign in Subsection 7.1.2, let’s fit a regression model and get the regression table. This time we provide the name of score_model_2 to our regression model fit, in so as to not overwrite the model score_model from Section 6.1.2.

score_model_2 <- lm(score ~ age + gender, data = evals_ch7)

get_regression_table(score_model_2)| term | estimate | std_error | statistic | p_value | lower_ci | upper_ci |

|---|---|---|---|---|---|---|

| intercept | 4.484 | 0.125 | 35.79 | 0.000 | 4.238 | 4.730 |

| age | -0.009 | 0.003 | -3.28 | 0.001 | -0.014 | -0.003 |

| gendermale | 0.191 | 0.052 | 3.63 | 0.000 | 0.087 | 0.294 |

The modeling equation for this scenario is:

where is an indicator function for sex == male. In other words, equals one if the current observation corresponds to a male professor, and 0 if the current observation corresponds to a female professor. This model can be visualized in Figure 7.5.

Figure 7.5: Instructor evaluation scores at UT Austin by gender: same slope

We see that:

- Females are treated as the baseline for comparison for no other reason than “female” is alphabetically earlier than “male.” The is the vertical “bump” that men get in their teaching evaluation scores. Or more precisely, it is the average difference in teaching score that men get relative to the baseline of women.

- Accordingly, the intercepts (which in this case make no sense since no instructor can have an age of 0) are :

- for women: = 4.484

- for men: = 4.484 + 0.191 = 4.675

- Both men and women have the same slope. In other words, in this model the associated effect of age is the same for men and women. So for every increase of one year in age, there is on average an associated change of = -0.009 (a decrease) in teaching score.

But wait, why is Figure 7.5 different than Figure 7.4! What is going on? What we have in the original plot is known as an interaction effect between age and gender. Focusing on fitting a model for each of men and women, we see that the resulting regression lines are different. Thus, gender appears to interact in different ways for men and women with the different values of age.

Two numerical explanatory variables

Let’s now attempt to identify factors that are associated with how much credit card debt an individual will have. The textbook An Introduction to Statistical Learning with Applications in R by Gareth James, Daniela Witten, Trevor Hastie, and Robert Tibshirani is an intermediate-level textbook on statistical and machine learning freely available here. It has an accompanying R package called ISLR with datasets that the authors use to demonstrate various machine learning methods. One dataset that is frequently used by the authors is the Credit dataset where predictions are made on the credit card balance held by credit card holders.

These predictions are based on information about them like income, credit limit, and education level. Note that this dataset is not based on actual individuals, it is a simulated dataset used for educational purposes.

Since no information was provided as to who these = 400 individuals are and how they came to be included in this dataset, it will be hard to make any scientific claims based on this data. Recall our discussion from the previous chapter that correlation does not necessarily imply causation. That being said, we’ll still use Credit to demonstrate multiple regression with:

- A numerical outcome variable , in this case credit card balance.

- Two explanatory variables:

- A first numerical explanatory variable . In this case, their credit limit.

- A second numerical explanatory variable . In this case, their income (in thousands of dollars).

In the forthcoming Learning Checks, we’ll consider a different scenario:

- The same numerical outcome variable : credit card balance.

- Two new explanatory variables:

- A first numerical explanatory variable : their credit rating.

- A second numerical explanatory variable : their age.

EDA

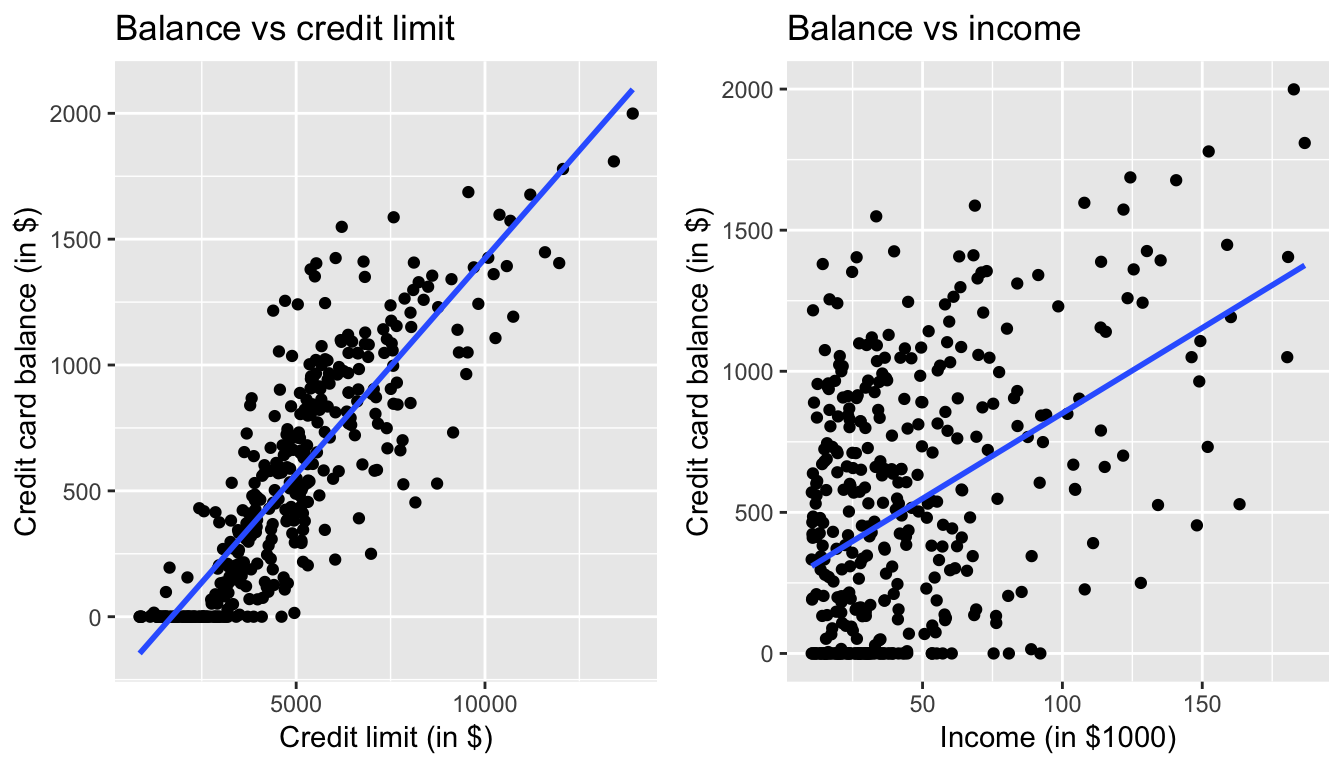

For example, the correlation coefficient of:

Balancewith itself is 1 as we would expect based on the definition of the correlation coefficient.BalancewithLimitis 0.862. This indicates a strong positive linear relationship, which makes sense as only individuals with large credit limits can accrue large credit card balances.BalancewithIncomeis 0.464. This is suggestive of another positive linear relationship, although not as strong as the relationship betweenBalanceandLimit.- As an added bonus, we can read off the correlation coefficient of the two explanatory variables,

LimitandIncomeof 0.792. In this case, we say there is a high degree of collinearity between these two explanatory variables.

Collinearity (or multicollinearity) is a phenomenon in which one explanatory variable in a multiple regression model can be linearly predicted from the others with a substantial degree of accuracy.

So in this case, if we knew someone’s credit card Limit and since Limit and Income are highly correlated, we could make a fairly accurate guess as to that person’s Income. Or put loosely, these two variables provided redundant information. For now let’s ignore any issues related to collinearity and press on.

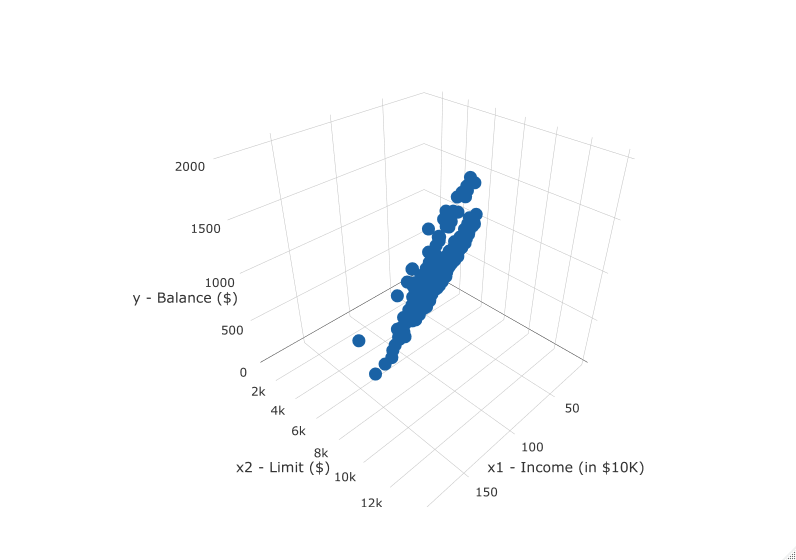

First, there is a positive relationship between credit limit and balance, since as credit limit increases so does the credit card balance; this is to be expected given the strongly positive correlation coefficient of 0.862. In the case of income, the positive relationship doesn’t appear as strong, given the weakly positive correlation coefficient of 0.464. However the two plots in Figure 7.1 only focus on the relationship of the outcome variable with each of the explanatory variables independently. To get a sense of the joint relationship of all three variables simultaneously through a visualization, let’s display the data in a 3-dimensional (3D) scatterplot, where

- The numerical outcome variable

Balanceis on the z-axis (vertical axis) - The two numerical explanatory variables form the “floor” axes. In this case

- The first numerical explanatory variable

Incomeis on of the floor axes. - The second numerical explanatory variable

Limitis on the other floor axis.

- The first numerical explanatory variable

Click on the following image to open an interactive 3D scatterplot in your browser:

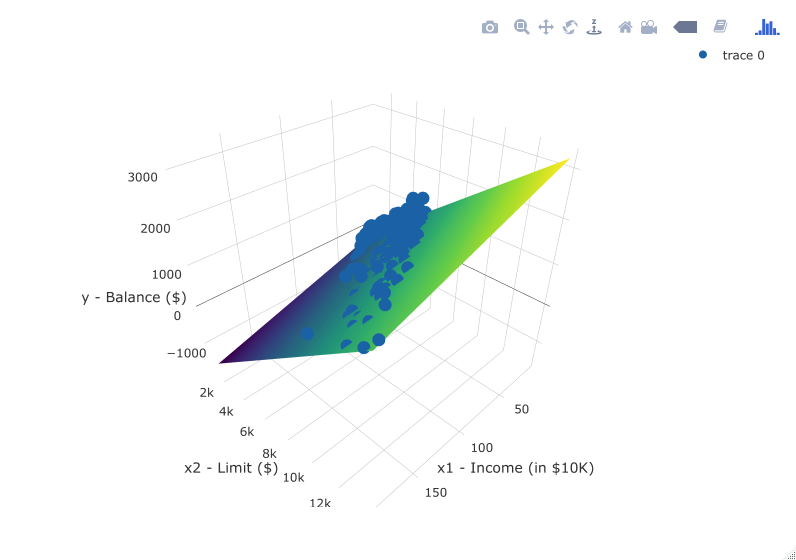

Previously in Figure 6.6, we plotted a “best-fitting” regression line through a set of points where the numerical outcome variable was teaching score and a single numerical explanatory variable was bty_avg. What is the analogous concept when we have two numerical predictor variables? Instead of a best-fitting line, we now have a best-fitting plane, which is a 3D generalization of lines which exist in 2D. Click on the following image to open an interactive plot of the regression plane in your browser. Move the image around, zoom in, and think about how this plane generalizes the concept of a linear regression line to three dimensions.

Observed/fitted values and residuals

As we did previously in Table 7.4, let’s unpack the output of the get_regression_points() function for our model for credit card balance for all 400 card holders in the dataset. Recall that each card holder corresponds to one of the 400 rows in the Credit data frame and also for one of the 400 3D points in the 3D scatterplots in Subsection 7.1.1.

regression_points <- get_regression_points(Balance_model) regression_points

| ID | Balance | Limit | Income | Balance_hat | residual |

|---|---|---|---|---|---|

| 1 | 333 | 3606 | 14.9 | 454 | -120.8 |

| 2 | 903 | 6645 | 106.0 | 559 | 344.3 |

| 3 | 580 | 7075 | 104.6 | 683 | -103.4 |

| 4 | 964 | 9504 | 148.9 | 986 | -21.7 |

| 5 | 331 | 4897 | 55.9 | 481 | -150.0 |

Recall the format of the output:

Balancecorresponds to (the observed value)Balance_hatcorresponds to (the fitted value)-

residualcorresponds to (the residual)

Simpson’s Paradox

Recall in Section 7.1, we saw the two following seemingly contradictory results when studying the relationship between credit card balance, credit limit, and income. On the one hand, the right hand plot of Figure 7.1 suggested that credit card balance and income were positively related:

Figure 7.8: Relationship between credit card balance and credit limit/income

On the other hand, the multiple regression in Table 7.3, suggested that when modeling credit card balance as a function of both credit limit and income at the same time, credit limit has a negative relationship with balance, as evidenced by the slope of -7.66. How can this be?

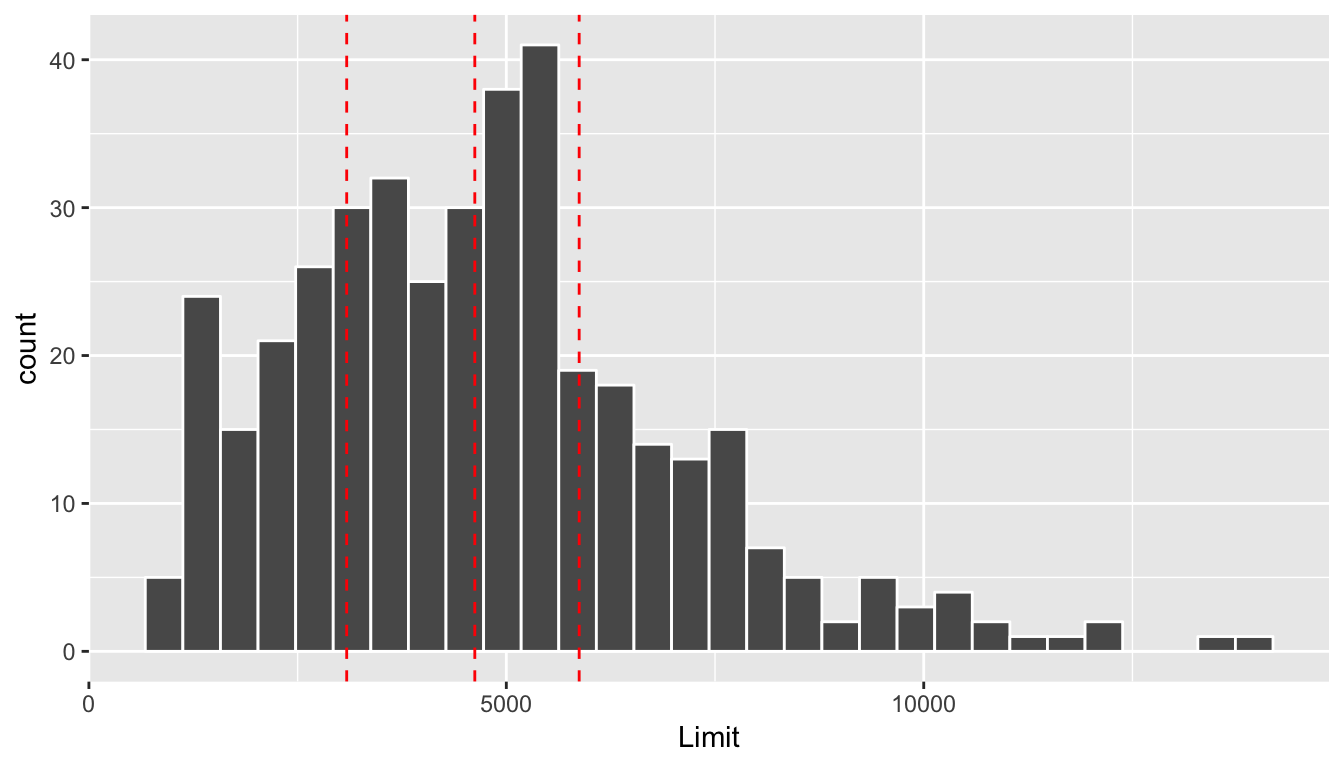

First, let’s dive a little deeper into the explanatory variable Limit. Figure 7.9 shows a histogram of all 400 values of Limit, along with vertical red lines that cut up the data into quartiles, meaning:

- 25% of credit limits were between $0 and $3088. Let’s call this the “low” credit limit bracket.

- 25% of credit limits were between $3088 and $4622. Let’s call this the “medium-low” credit limit bracket.

- 25% of credit limits were between $4622 and $5873. Let’s call this the “medium-high” credit limit bracket.

- 25% of credit limits were over $5873. Let’s call this the “high” credit limit bracket.

Figure 7.9: Histogram of credit limits and quartiles

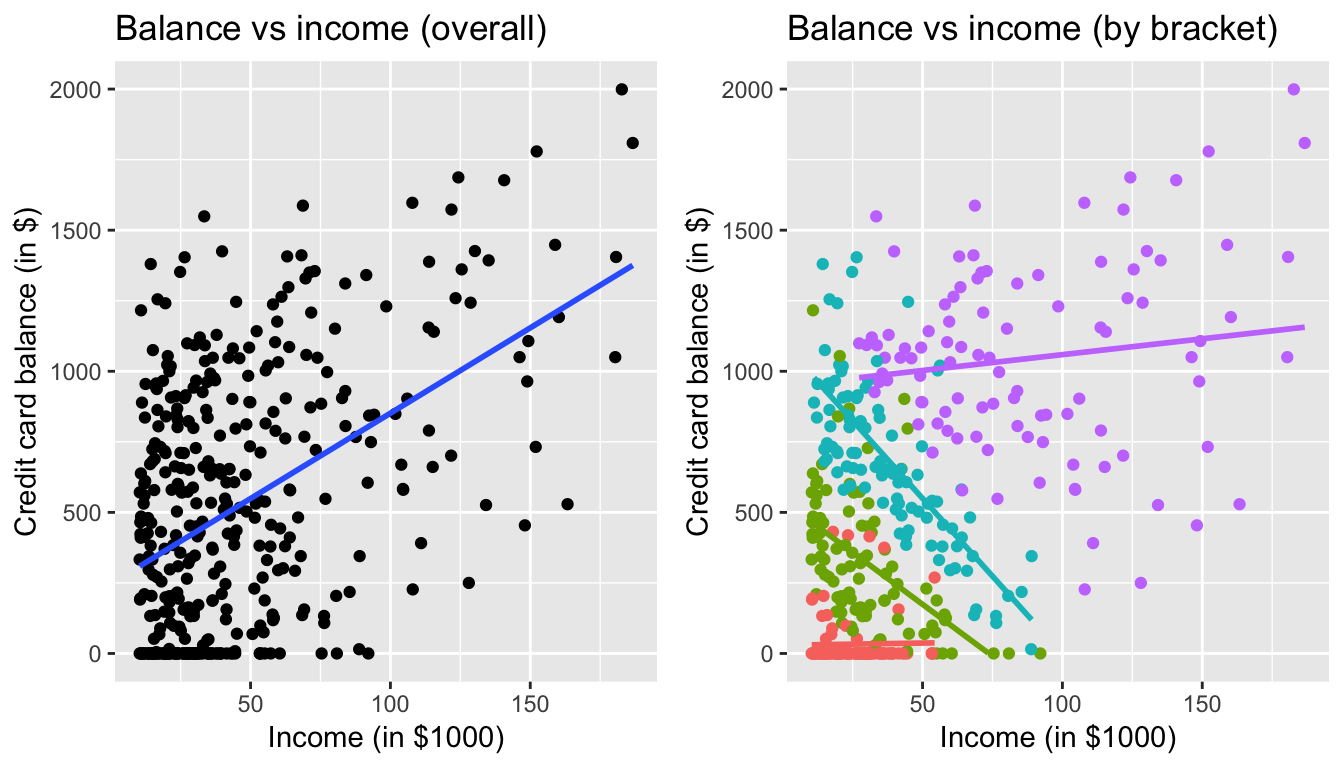

Let’s now display

- The scatterplot showing the relationship between credit card balance and limit (the right-hand plot of Figure 7.1).

- The scatterplot showing the relationship between credit card balance and limit now with a color aesthetic added corresponding to the credit limit bracket.

Figure 7.10: Relationship between credit card balance and income for different credit limit brackets

In the right-hand plot, the

- Red points (bottom-left) correspond to the low credit limit bracket.

- Green points correspond to the medium-low credit limit bracket.

- Blue points correspond to the medium-high credit limit bracket.

- Purple points (top-right) correspond to the high credit limit bracket.

The left-hand plot focuses on the relationship between balance and income in aggregate, but the right-hand plot focuses on the relationship between balance and income broken down by credit limit bracket. Whereas in aggregate there is an overall positive relationship, when broken down we now see that for the low (red points), medium-low (green points), and medium-high (blue points) income bracket groups, the strong positive relationship between credit card balance and income disappears! Only for the high bracket does the relationship stay somewhat positive. In this example, credit limit is a confounding variable for credit card balance and income.