Chapter 5.1 Descriptive Statistics

Descriptive statistics are used to organize or summarize a particular set of measurements. In other words, a descriptive statistic will describe that set of measurements. For example, in our study above, the mean described the absenteeism rates of five nurses on each unit. The U.S. census represents another example of descriptive statistics. In this case, the information that is gathered concerning gender, race, income, etc. is compiled to describe the population of the United States at a given point in time. What these examples have in common is that they organize, summarize, and describe a set of measurements.

Just “looking at the data” isn’t a terribly effective way of understanding data. In order to get some idea about what’s going on, we need to calculate some descriptive statistics (this chapter) and draw some nice pictures (Chapter 6). Since the descriptive statistics are the easier of the two topics, I’ll start with those. Nevertheless, I’ll show you a histogram of the afl.margins data, since it should help you get a sense of what the data we’re trying to describe actually look like. For what it’s worth, this histogram, which is shown in Figure 5.1, was generated using the hist() function. We’ll talk a lot more about how to draw histograms in Section 6.3.

Summary

Calculating some basic descriptive statistics is one of the very first things you do when analysing real data, and descriptive statistics are much simpler to understand than inferential statistics, so like every other statistics textbook I’ve started with descriptives. In this chapter, we talked about the following topics:

- Measures of central tendency. Broadly speaking, central tendency measures tell you where the data are. There are three measures that are typically reported in the literature: the mean, the median and the mode. (Section 5.1)

- Measures of variability. In contrast, measures of variability tell you about how “spread out” the data are. The key measures are the range, the standard deviation and the interquartile range. (Section 5.2)

- Getting summaries of variables in R. Since this book focuses on doing data analysis in R, we spent a bit of time talking about how descriptive statistics are computed in R. (Sections ?? and 5.5)

- Standard scores. The z-score is a slightly unusual beast. It’s not quite a descriptive statistic, and not quite an inference. We talked about it in Section 5.6. Make sure you understand that section: it’ll come up again later.

- Correlations. Want to know how strong the relationship is between two variables? Calculate a correlation. (Section 5.7)

- Missing data. Dealing with missing data is one of those frustrating things that data analysts really wish they didn’t have to think about. In real life it can be hard to do well. For the purpose of this book, we only touched on the basics in Section 5.8.

In the next chapter we’ll move on to a discussion of how to draw pictures! Everyone loves a pretty picture, right? But before we do, I want to end on an important point. A traditional first course in statistics spends only a small proportion of the class on descriptive statistics, maybe one or two lectures at most. The vast majority of the lecturer’s time is spent on inferential statistics, because that’s where all the hard stuff is. That makes sense, but it hides the practical everyday importance of choosing good descriptives. With that in mind…

Good descriptive statistics are descriptive!

The death of one man is a tragedy. The death of millions is a statistic.

– Josef Stalin, Potsdam 1945

950,000 – 1,200,000

– Estimate of Soviet repression deaths, 1937-1938 (Ellman 2002)

Stalin’s infamous quote about the statistical character of the death of millions is worth giving some thought. The clear intent of his statement is that the death of an individual touches us personally and its force cannot be denied, but that the deaths of a multitude are incomprehensible, and as a consequence mere statistics, more easily ignored. I’d argue that Stalin was half right. A statistic is an abstraction, a description of events beyond our personal experience, and so hard to visualise. Few if any of us can imagine what the deaths of millions is “really” like, but we can imagine one death, and this gives the lone death its feeling of immediate tragedy, a feeling that is missing from Ellman’s cold statistical description.

Yet it is not so simple: without numbers, without counts, without a description of what happened, we have no chance of understanding what really happened, no opportunity even to try to summon the missing feeling. And in truth, as I write this, sitting in comfort on a Saturday morning, half a world and a whole lifetime away from the Gulags, when I put the Ellman estimate next to the Stalin quote a dull dread settles in my stomach and a chill settles over me. The Stalinist repression is something truly beyond my experience, but with a combination of statistical data and those recorded personal histories that have come down to us, it is not entirely beyond my comprehension. Because what Ellman’s numbers tell us is this: over a two year period, Stalinist repression wiped out the equivalent of every man, woman and child currently alive in the city where I live. Each one of those deaths had its own story, was its own tragedy, and only some of those are known to us now. Even so, with a few carefully chosen statistics, the scale of the atrocity starts to come into focus.

Thus it is no small thing to say that the first task of the statistician and the scientist is to summarise the data, to find some collection of numbers that can convey to an audience a sense of what has happened. This is the job of descriptive statistics, but it’s not a job that can be done with the numbers alone. You are a data analyst, not a statistical software package. Part of your job is to take these statistics and turn them into a description. When you analyse data, it is not sufficient to list off a collection of numbers. Always remember that what you’re really trying to do is communicate with a human audience. The numbers are important, but they need to be put together into a meaningful story that your audience can interpret. That means you need to think about framing. You need to think about context. And you need to think about the individual events that your statistics are summarising.

References

Ellman, Michael. 2002. “Soviet Repression Statistics: Some Comments.” Europe-Asia Studies 54 (7). Taylor & Francis: 1151–72.

-

Note for non-Australians: the AFL is an Australian rules football competition. You don’t need to know anything about Australian rules in order to follow this section.↩

-

The choice to use Σ to denote summation isn’t arbitrary: it’s the Greek upper case letter sigma, which is the analogue of the letter S in that alphabet. Similarly, there’s an equivalent symbol used to denote the multiplication of lots of numbers: because multiplications are also called “products”, we use the symbol Π for this, the Greek upper case pi, which is the analogue of the letter P.↩

-

Note that, just as we saw with the combine function c() and the remove function rm(), the sum() function has unnamed arguments. I’ll talk about unnamed arguments later in Section ??, but for now let’s just ignore this detail.↩

-

www.abc.net.au/news/stories/2010/09/24/3021480.htm↩

-

Or at least, the basic statistical theory – these days there is a whole subfield of statistics called robust statistics that tries to grapple with the messiness of real data and develop theory that can cope with it.↩

-

As we saw earlier, R does have a function called mode(), but it does something completely different.↩

-

This is called a “0-1 loss function”, meaning that you either win (1) or you lose (0), with no middle ground.↩

-

Well, I will very briefly mention the one that I think is coolest, for a very particular definition of “cool”, that is. Variances are additive. Here’s what that means: suppose I have two variables and , whose variances are $↩

-

With the possible exception of the third question.↩

-

Strictly, the assumption is that the data are normally distributed, which is an important concept that we’ll discuss more in Chapter ??, and will turn up over and over again later in the book.↩

-

The assumption again being that the data are normally-distributed!↩

-

The “-3” part is something that statisticians tack on to ensure that the normal curve has kurtosis zero. It looks a bit stupid, just sticking a “-3” at the end of the formula, but there are good mathematical reasons for doing this.↩

-

I haven’t discussed how to compute z-scores explicitly, but you can probably guess. For a variable X, the simplest way is to use a command like (X - mean(X)) / sd(X). There’s also a fancier function called scale() that you can use, but it relies on somewhat more complicated R concepts that I haven’t explained yet.↩

-

Technically, because I’m calculating means and standard deviations from a sample of data, but want to talk about my grumpiness relative to a population, what I’m actually doing is estimating a z-score. However, since we haven’t talked about estimation yet (see Chapter ??) I think it’s best to ignore this subtlety, especially as it makes very little difference to our calculations.↩

-

Though some caution is usually warranted. It’s not always the case that one standard deviation on variable A corresponds to the same “kind” of thing as one standard deviation on variable B. Use common sense when trying to determine whether or not the scores of two variables can be meaningfully compared.↩

-

Actually, even that table is more than I’d bother with. In practice most people pick one measure of central tendency, and one measure of variability only.↩

-

Just like we saw with the variance and the standard deviation, in practice we divide by N−1 rather than N.↩

-

This is an oversimplification, but it’ll do for our purposes.↩

-

If you are reading this after having already completed Chapter ?? you might be wondering about hypothesis tests for correlations. R has a function called cor.test() that runs a hypothesis test for a single correlation, and the psych package contains a version called corr.test() that can run tests for every correlation in a correlation matrix; hypothesis tests for correlations are discussed in more detail in Section ??.↩

-

An alternative usage of cor() is to correlate one set of variables with another subset of variables. If X and Y are both data frames with the same number of rows, then cor(x = X, y = Y) will produce a correlation matrix that correlates all variables in X with all variables in Y.↩

-

It’s worth noting that, even though we have missing data for each of these variables, the output doesn’t contain any NA values. This is because, while describe() also has an na.rm argument, the default value for this function is na.rm = TRUE.↩

-

The technical term here is “missing completely at random” (often written MCAR for short). Makes sense, I suppose, but it does sound ungrammatical to me.↩

Descriptive statistics separately for each group

It is very commonly the case that you find yourself needing to look at descriptive statistics, broken down by some grouping variable. This is pretty easy to do in R, and there are three functions in particular that are worth knowing about: by(), describeBy() and aggregate(). Let’s start with the describeBy() function, which is part of the psych package. The describeBy() function is very similar to the describe() function, except that it has an additional argument called group which specifies a grouping variable.

As you can see, the output is essentially identical to the output that the describe() function produces, except that the output now gives you means, standard deviations etc. separately for the CBT group and the no.therapy group. Notice that, as before, the output displays asterisks for factor variables, in order to draw your attention to the fact that the descriptive statistics that it has calculated won’t be very meaningful for those variables. Nevertheless, this command has given us some really useful descriptive statistics for the mood.gain variable, broken down as a function of therapy.

A somewhat more general solution is offered by the by() function. There are three arguments that you need to specify when using this function: the data argument specifies the data set, the INDICES argument specifies the grouping variable, and the FUN argument specifies the name of a function that you want to apply separately to each group.

Again, this output is pretty easy to interpret. It’s the output of the summary() function, applied separately to the CBT group and the no.therapy group. For the two factors (drug and therapy) it prints out a frequency table, whereas for the numeric variable (mood.gain) it prints out the minimum, first quartile, median, mean, third quartile and maximum.
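The by() call described above can be tried out on a small scale. This is a minimal sketch, assuming a hypothetical data frame called clin whose values are invented for illustration (the real clin.trial data are not reproduced here). Only the variable names drug, therapy and mood.gain and the therapy levels come from the text; the drug level names are made up.

```r
# Hypothetical stand-in for the clin.trial data frame (values invented for illustration)
clin <- data.frame(
  drug      = factor(rep(c("placebo", "drugA", "drugB"), each = 4)),
  therapy   = factor(rep(c("no.therapy", "CBT"), times = 6)),
  mood.gain = c(0.5, 0.6, 0.3, 0.8, 0.6, 1.0, 0.8, 1.2, 1.4, 1.6, 1.2, 1.8)
)

# Apply summary() separately to the rows belonging to each level of therapy
by( data = clin, INDICES = clin$therapy, FUN = summary )

# FUN can be any function that accepts a data frame; here, the mean mood gain per group
gain.by.therapy <- by( data = clin, INDICES = clin$therapy,
                       FUN = function(d) mean(d$mood.gain) )
print( gain.by.therapy )
```

Passing an anonymous function as FUN is what makes by() more general than describeBy(): whatever the function returns for one group's rows is computed again for every other group.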

What if you have multiple grouping variables? Suppose, for example, you would like to look at the average mood gain separately for all possible combinations of drug and therapy. It is actually possible to do this using the by() and describeBy() functions, but I usually find it more convenient to use the aggregate() function in this situation. There are again three arguments that you need to specify.

The formula argument is used to indicate which variable you want to analyse, and which variables are used to specify the groups. For instance, if you want to look at mood.gain separately for each possible combination of drug and therapy, the formula you want is mood.gain ~ drug + therapy. The data argument is used to specify the data frame containing all the data, and the FUN argument is used to indicate what function you want to calculate for each group (e.g., the mean).
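Here is a minimal sketch of the aggregate() call described above. It again uses a hypothetical clin data frame with invented values in place of clin.trial. The formula is passed as the first (positional) argument, which is the usual way of calling the formula interface.

```r
# Hypothetical stand-in for clin.trial (values invented for illustration)
clin <- data.frame(
  drug      = factor(rep(c("placebo", "drugA", "drugB"), each = 4)),
  therapy   = factor(rep(c("no.therapy", "CBT"), times = 6)),
  mood.gain = c(0.5, 0.6, 0.3, 0.8, 0.6, 1.0, 0.8, 1.2, 1.4, 1.6, 1.2, 1.8)
)

# Mean mood gain for every combination of drug and therapy:
# left of ~ is the variable to summarise, right of ~ are the grouping variables
gain.means <- aggregate( mood.gain ~ drug + therapy, data = clin, FUN = mean )
print( gain.means )
```

The result is an ordinary data frame with one row per drug-by-therapy combination (six rows here), which makes it easy to reuse in later calculations.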

Getting an overall summary of a variable

Up to this point in the chapter I’ve explained several different summary statistics that are commonly used when analysing data, along with specific functions that you can use in R to calculate each one. However, it’s kind of annoying to have to separately calculate means, medians, standard deviations, skews etc. Wouldn’t it be nice if R had some helpful functions that would do all these tedious calculations at once? Something like summary() or describe(), perhaps? Why yes, yes it would. So much so that both of these functions exist. The summary() function is in the base package, so it comes with every installation of R. The describe() function is part of the psych package, which we loaded earlier in the chapter.

“Summarising” a variable

The summary() function is an easy thing to use, but a tricky thing to understand in full, since it’s a generic function (see Section 4.11). The basic idea behind the summary() function is that it prints out some useful information about whatever object (i.e., variable, as far as we’re concerned) you specify as the object argument.

As a consequence, the behaviour of the summary() function differs quite dramatically depending on the class of the object that you give it.

Let’s start by giving it a numeric object:

summary( object = afl.margins )

## Min. 1st Qu. Median Mean 3rd Qu. Max.
## 0.00 12.75 30.50 35.30 50.50 116.00

For numeric variables, we get a whole bunch of useful descriptive statistics. It gives us the minimum and maximum values (i.e., the range), the first and third quartiles (25th and 75th percentiles; i.e., the IQR), the mean and the median. In other words, it gives us a pretty good collection of descriptive statistics related to the central tendency and the spread of the data.

Okay, what about if we feed it a logical vector instead? Let’s say I want to know something about how many “blowouts” there were in the 2010 AFL season. I operationalise the concept of a blowout (see Chapter 2) as a game in which the winning margin exceeds 50 points.
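Here is a minimal sketch of the blowout example. It assumes a short hypothetical vector of winning margins (invented values) in place of afl.margins. For a logical vector, summary() reports the mode together with the number of FALSE and TRUE values.

```r
# Hypothetical winning margins (invented values, standing in for afl.margins)
margins <- c(56, 31, 56, 8, 32, 14, 36, 56, 19, 1, 3, 104, 43, 44, 72)

# A "blowout" is a game whose winning margin exceeds 50 points
blowouts <- margins > 50

# Prints the mode ("logical") and the counts of FALSE and TRUE
summary( object = blowouts )
```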

For factors, we get a frequency table, just like we got when we used the table() function. Interestingly, however, if we convert this to a character vector using the as.character() function, we don’t get the same results:

f2 <- as.character( afl.finalists )

summary( object = f2 )
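The sketch below puts the two cases side by side. It uses a hypothetical factor called finalists with invented team labels, standing in for afl.finalists. Applied to the factor, summary() counts each level. Applied to the same values stored as a character vector, it reports only the length, class and mode.

```r
# Hypothetical finalist labels (invented, standing in for afl.finalists)
finalists <- factor(c("teamA", "teamB", "teamC", "teamA",
                      "teamB", "teamC", "teamB", "teamD"))

f.summary   <- summary( object = finalists )                # factor: one count per level
chr.summary <- summary( object = as.character(finalists) )  # character: Length, Class, Mode
f.summary
chr.summary
```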

This is one of those situations I was referring to in Section 4.7, in which it is helpful to declare your nominal scale variable as a factor rather than a character vector.

Because I’ve defined afl.finalists as a factor, R knows that it should treat it as a nominal scale variable, and so it gives you a much more detailed (and helpful) summary than it would have if I’d left it as a character vector.

“Summarising” a data frame

Okay what about data frames? When you pass a data frame to the summary() function, it produces a slightly condensed summary of each variable inside the data frame. To give you a sense of how this can be useful, let’s try this for a new data set, one that you’ve never seen before. The data is stored in the clinicaltrial.Rdata file, and we’ll use it a lot in Chapter ?? (you can find a complete description of the data at the start of that chapter). Let’s load it, and see what we’ve got.

“Describing” a data frame

The describe() function (in the psych package) is a little different, and it’s really only intended to be useful when your data are interval or ratio scale.

Unlike the summary() function, it calculates the same descriptive statistics for any type of variable you give it. By default, these are:

- var. This is just an index: 1 for the first variable, 2 for the second variable, and so on.

- n. This is the sample size: more precisely, it’s the number of non-missing values.

- mean. This is the sample mean (Section 5.1.1).

- sd. This is the (bias corrected) standard deviation (Section 5.2.5).

- median. The median (Section 5.1.3).

- trimmed. This is the trimmed mean. By default it’s the 10% trimmed mean (Section 5.1.6).

- mad. The median absolute deviation (Section 5.2.6).

- min. The minimum value.

- max. The maximum value.

- range. The range spanned by the data (Section 5.2.1).

- skew. The skewness (Section 5.3).

- kurtosis. The kurtosis (Section 5.3).

- se. The standard error of the mean (Chapter ??).
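Most of these columns can be computed with base R functions, which is a useful way to check what each one means. This is a sketch under stated assumptions, not a re-implementation of describe(). The data vector is hypothetical, the trimmed mean uses mean(x, trim = 0.1), and mad() uses base R's default scaling constant (1.4826), so small differences from the psych output are possible.

```r
# Hypothetical numeric data (invented values); NA marks a missing observation
x    <- c(0.1, 0.3, 0.5, 0.6, 0.8, 0.9, 1.1, 1.3, 1.4, 1.8, NA)
x.ok <- x[!is.na(x)]          # keep only the non-missing values
n    <- length(x.ok)

desc <- c(
  n       = n,
  mean    = mean(x.ok),
  sd      = sd(x.ok),                  # divides by n - 1
  median  = median(x.ok),
  trimmed = mean(x.ok, trim = 0.1),    # 10% trimmed mean
  mad     = mad(x.ok),                 # median absolute deviation (scaled by 1.4826)
  min     = min(x.ok),
  max     = max(x.ok),
  range   = max(x.ok) - min(x.ok),
  se      = sd(x.ok) / sqrt(n)         # standard error of the mean
)
round( desc, 2 )
```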

Notice that these descriptive statistics generally only make sense for data that are interval or ratio scale (usually encoded as numeric vectors).

For nominal or ordinal variables (usually encoded as factors), most of these descriptive statistics are not all that useful. What the describe() function does is convert factors and logical variables to numeric vectors in order to do the calculations. These variables are marked with * and most of the time, the descriptive statistics for those variables won’t make much sense. If you try to feed it a data frame that includes a character vector as a variable, it produces an error.

With those caveats in mind, let’s use the describe() function to have a look at the clin.trial data frame. Here’s what we get:

describe( x = clin.trial )

## vars n mean sd median trimmed mad min max range skew
## drug* 1 18 2.00 0.84 2.00 2.00 1.48 1.0 3.0 2.0 0.00
## therapy* 2 18 1.50 0.51 1.50 1.50 0.74 1.0 2.0 1.0 0.00
## mood.gain 3 18 0.88 0.53 0.85 0.88 0.67 0.1 1.8 1.7 0.13
## kurtosis se
## drug* -1.66 0.20
## therapy* -2.11 0.12
## mood.gain -1.44 0.13

As you can see, the output for the asterisked variables is pretty meaningless, and should be ignored. However, for the mood.gain variable, there’s a lot of useful information.

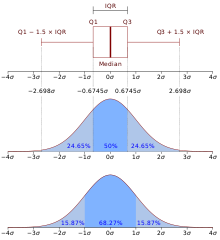

Quartile

A quartile is a type of quantile. The first quartile (Q1) is defined as the middle number between the smallest number and the median of the data set. The second quartile (Q2) is the median of the data. The third quartile (Q3) is the middle value between the median and the highest value of the data set.
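Here is a minimal sketch of computing quartiles in R with quantile(), using a small hypothetical data set. There are several conventions for quartiles. quantile() supports nine of them through its type argument (the default is type = 7), and they do not all agree with the "middle of each half" definition given above. For this data set, type 7 gives Q1 = 4 and Q3 = 10. Taking the medians of the lower half (1, 3, 5) and the upper half (9, 11, 13) instead gives 3 and 11, which is what type = 6 happens to return here.

```r
x <- c(7, 1, 3, 9, 5, 11, 13)                              # hypothetical data, deliberately unsorted
quartiles <- quantile( x, probs = c(0.25, 0.50, 0.75) )    # default convention, type = 7
quartiles                                                  # Q1 = 4, Q2 = 7, Q3 = 10
iqr <- IQR( x )                                            # Q3 - Q1 = 6
iqr
q6 <- quantile( x, probs = c(0.25, 0.75), type = 6 )       # a different convention: 3 and 11
q6
```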

In applications of statistics such as epidemiology, sociology and finance, the quartiles of a ranked set of data values are the four subsets whose boundaries are the three quartile points. Thus an individual item might be described as being "on the upper quartile".

Definitions

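| Symbol | Names | Definition |
|---|---|---|
| Q1 | first quartile, 25th percentile | splits off the lowest 25% of data from the highest 75% |
| Q2 | second quartile, median, 50th percentile | cuts data set in half |
| Q3 | third quartile, 75th percentile | splits off the highest 25% of data from the lowest 75% |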

Quantile

In statistics and probability, quantiles are cut points dividing the range of a probability distribution into continuous intervals with equal probabilities, or dividing the observations in a sample in the same way.

There is one less quantile than the number of groups created. Thus quartiles are the three cut points that will divide a dataset into four equal-sized groups. Common quantiles have special names: for instance quartile, decile (creating 10 groups: see below for more). The groups created are termed halves, thirds, quarters, etc., though sometimes the terms for the quantile are used for the groups created, rather than for the cut points.

q-quantiles are values that partition a finite set of values into q subsets of (nearly) equal sizes.

There are q − 1 of the q-quantiles, one for each integer k satisfying 0 < k < q.

In some cases the value of a quantile may not be uniquely determined, as can be the case for the median (2-quantile) of a uniform probability distribution on a set of even size. Quantiles can also be applied to continuous distributions, providing a way to generalize rank statistics to continuous variables. When the cumulative distribution function of a random variable is known, the q-quantiles are the application of the quantile function (the inverse function of the cumulative distribution function) to the values {1/q, 2/q, …, (q − 1)/q}.
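The recipe in the last sentence, which evaluates the quantile function at 1/q, 2/q, …, (q − 1)/q, can be sketched in R. This sketch assumes hypothetical data (1:100) and uses q = 4 for illustration. quantile() gives the sample q-quantiles. qnorm() is R's quantile function for the standard normal distribution and gives the theoretical ones. pnorm() is the matching cumulative distribution function, so applying it to the output of qnorm() gives back the original probabilities.

```r
q <- 4                                     # number of groups; try 10 (deciles) or 100 (percentiles)
probs <- seq_len(q - 1) / q                # 1/q, 2/q, ..., (q - 1)/q
x <- 1:100                                 # hypothetical data
sample.q <- quantile( x, probs = probs )   # the q - 1 sample q-quantiles
sample.q
theory.q <- qnorm( probs )                 # inverse CDF of the standard normal at the same probabilities
theory.q
pnorm( theory.q )                          # the CDF maps them back to 0.25, 0.50, 0.75
```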

Specialized quantiles

Some q-quantiles have special names:

- The only 2-quantile is called the median

- The 3-quantiles are called tertiles or terciles → T

- The 4-quantiles are called quartiles → Q; the difference between upper and lower quartiles is also called the interquartile range, midspread or middle fifty → IQR = Q3 − Q1

- The 5-quantiles are called quintiles → QU

- The 6-quantiles are called sextiles → S

- The 7-quantiles are called septiles

- The 8-quantiles are called octiles → O

- The 10-quantiles are called deciles → D

- The 12-quantiles are called duo-deciles → Dd

- The 16-quantiles are called hexadeciles → H

- The 20-quantiles are called ventiles, vigintiles, or demi-deciles → V

- The 33-quantiles are called trigintatreciles → TT

- The 100-quantiles are called percentiles → P

- The 1000-quantiles are called permilles → Pr

Skew and kurtosis{#skew}

There are two more descriptive statistics that you will sometimes see reported in the psychological literature, known as skew and kurtosis.

In practice, neither one is used anywhere near as frequently as the measures of central tendency and variability that we’ve been talking about. Skew is pretty important, so you do see it mentioned a fair bit; but I’ve actually never seen kurtosis reported in a scientific article to date.

Figure 5.4: An illustration of skewness. On the left we have a negatively skewed data set (skewness = −0.93), in the middle we have a data set with no skew (technically, skewness = −0.006), and on the right we have a positively skewed data set (skewness = 0.93).

Since it’s the more interesting of the two, let’s start by talking about the skewness. Skewness is basically a measure of asymmetry, and the easiest way to explain it is by drawing some pictures. As Figure 5.4 illustrates, if the data tend to have a lot of extreme small values (i.e., the lower tail is “longer” than the upper tail) and not so many extremely large values (left panel), then we say that the data are negatively skewed. On the other hand, if there are more extremely large values than extremely small ones (right panel) we say that the data are positively skewed. That’s the qualitative idea behind skewness. The actual formula for the skewness of a data set is as follows:

$$
\mbox{skewness}(X) = \frac{1}{N \hat{\sigma}^3} \sum_{i=1}^N \left( X_i - \bar{X} \right)^3
$$

where $N$ is the number of observations, $\bar{X}$ is the sample mean, and $\hat{\sigma}$ is the standard deviation (the “divide by $N-1$” version, that is). Perhaps more helpfully, it might be useful to point out that the psych package contains a skew() function that you can use to calculate skewness.
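The skewness formula translates almost line for line into R. This is a minimal sketch. skewness() is a hypothetical helper name, not the psych function skew(). It drops missing values first and uses sd(), which is the divide-by-(N − 1) standard deviation assumed in the formula. The three calls use small invented vectors: one symmetric, one with a long upper tail and one with a long lower tail.

```r
# Skewness: sum of cubed deviations divided by N * sd^3.
# skewness() is a hypothetical helper, not psych::skew().
skewness <- function(x) {
  x <- x[!is.na(x)]                            # drop missing values
  N <- length(x)
  sum( (x - mean(x))^3 ) / ( N * sd(x)^3 )     # sd() divides by N - 1
}

skewness( c(1, 2, 3, 4, 5) )          # symmetric: 0
skewness( c(1, 1, 1, 2, 10) )         # long upper tail: positive skew
skewness( c(-10, -2, -1, -1, -1) )    # long lower tail: negative skew
```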

The final measure that is sometimes referred to, though very rarely in practice, is the kurtosis of a data set. Put simply, kurtosis is a measure of the “pointiness” of a data set, as illustrated in Figure 5.5.

Figure 5.5: An illustration of kurtosis. On the left, we have a “platykurtic” data set (kurtosis = −0.97), meaning that the data set is “too flat”. In the middle we have a “mesokurtic” data set (kurtosis = 0.007, almost exactly 0), which means that the pointiness of the data is just about right. Finally, on the right, we have a “leptokurtic” data set (kurtosis = 2.02) indicating that the data set is “too pointy”. Note that kurtosis is measured with respect to a normal curve (black line).

By convention, we say that the “normal curve” (black lines) has zero kurtosis, so the pointiness of a data set is assessed relative to this curve. In this Figure, the data on the left are not pointy enough, so the kurtosis is negative and we call the data platykurtic. The data on the right are too pointy, so the kurtosis is positive and we say that the data are leptokurtic. But the data in the middle are just pointy enough, so we say that they are mesokurtic and have kurtosis zero. This is summarised in the table below:

| informal term | technical name | kurtosis value |
|---|---|---|
| too flat | platykurtic | negative |
| just pointy enough | mesokurtic | zero |
| too pointy | leptokurtic | positive |

The equation for kurtosis is pretty similar in spirit to the formulas we’ve seen already for the variance and the skewness, except that where the variance involved squared deviations and the skewness involved cubed deviations, the kurtosis involves raising the deviations to the fourth power (see footnote 75):

$$
\mbox{kurtosis}(X) = \frac{1}{N \hat{\sigma}^4} \sum_{i=1}^N \left( X_i - \bar{X} \right)^4 - 3
$$

I know, it’s not terribly interesting to me either. More to the point, the psych package has a function called kurtosi() that you can use to calculate the kurtosis of your data. For instance, to do this for the AFL margins, you would type kurtosi( x = afl.margins ).
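The kurtosis formula can be sketched the same way. kurtosis() below is a hypothetical helper, not psych's kurtosi(). It includes the −3 correction, so a normal curve scores about zero. The simulated data are assumptions chosen to show each case:

- normal draws, which are mesokurtic;
- uniform draws, which are flat or platykurtic (the theoretical value is −1.2);
- the difference of two exponential draws, which follows a Laplace distribution with a theoretical kurtosis of 3 on this scale (pointy, or leptokurtic).

```r
# Kurtosis: sum of fourth-power deviations divided by N * sd^4, minus 3.
# kurtosis() is a hypothetical helper, not psych::kurtosi().
kurtosis <- function(x) {
  x <- x[!is.na(x)]                            # drop missing values
  N <- length(x)
  sum( (x - mean(x))^4 ) / ( N * sd(x)^4 ) - 3
}

set.seed(1)                                    # make the simulated data reproducible
n <- 1e5
k.normal  <- kurtosis( rnorm(n) )              # about 0: mesokurtic
k.uniform <- kurtosis( runif(n) )              # about -1.2: platykurtic ("too flat")
k.laplace <- kurtosis( rexp(n) - rexp(n) )     # about 3: leptokurtic ("too pointy")
round( c(normal = k.normal, uniform = k.uniform, laplace = k.laplace), 2 )
```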

Standardization (z-score)

A common task in statistics is to standardize variables, also known as calculating z-scores. The purpose of standardizing a vector is to put it on a common scale which allows you to compare it to other (standardized) variables. To standardize a vector, you subtract the vector’s mean from each of its values, and then divide the result by the vector’s standard deviation.

- A positive z-score says the data point is above average.

- A negative z-score says the data point is below average.

- A z-score close to 0 says the data point is close to average.

- A data point can be considered unusual if its z-score is above 3 or below −3.
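In R, standardising a vector takes one line. This sketch uses a small hypothetical vector of raw scores. The first version follows the recipe above directly. scale() returns a one-column matrix, so its result is converted back to a plain vector for comparison. By construction, the standardised values have mean 0 and standard deviation 1.

```r
x <- c(12, 15, 17, 20, 36)              # hypothetical raw scores (invented)
z <- (x - mean(x)) / sd(x)              # subtract the mean, divide by the standard deviation
round( z, 2 )
z.scaled <- as.vector( scale( x ) )     # scale() centres and scales in the same way
all.equal( z, z.scaled )                # TRUE
```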

A simpler way around this is to describe my grumpiness by comparing me to other people. Shockingly, out of my friend’s sample of 1,000,000 people, only 159 people were as grumpy as me (that’s not at all unrealistic, frankly), suggesting that I’m in the top 0.016% of people for grumpiness. This makes much more sense than trying to interpret the raw data. This idea – that we should describe my grumpiness in terms of the overall distribution of the grumpiness of humans – is the qualitative idea that standardisation attempts to get at. One way to do this is to do exactly what I just did, and describe everything in terms of percentiles. However, the problem with doing this is that “it’s lonely at the top”. Suppose that my friend had only collected a sample of 1000 people (still a pretty big sample for the purposes of testing a new questionnaire, I’d like to add), and this time gotten a mean of 16 out of 50 with a standard deviation of 5, let’s say. The problem is that almost certainly, not a single person in that sample would be as grumpy as me.

However, all is not lost. A different approach is to convert my grumpiness score into a standard score, also referred to as a z-score. The standard score is defined as the number of standard deviations above the mean that my grumpiness score lies. To phrase it in “pseudo-maths”, the standard score is calculated like this:

$$
\mbox{standard score} = \frac{\mbox{raw score} - \mbox{mean}}{\mbox{standard deviation}}
$$

In actual maths, the equation for the z-score is

$$
z_i = \frac{X_i - \bar{X}}{\hat{\sigma}}
$$

So, going back to the grumpiness data, we can now transform Dan’s raw grumpiness into a standardised grumpiness score (see footnote 76). If the mean is 17 and the standard deviation is 5, then my standardised grumpiness score would be (see footnote 77)

$$
z = \frac{35 - 17}{5} = 3.6
$$

To interpret this value, recall the rough heuristic that I provided in Section 5.2.5, in which I noted that 99.7% of values are expected to lie within 3 standard deviations of the mean. So the fact that my grumpiness corresponds to a score of 3.6 indicates that I’m very grumpy indeed. Later on, in Section ??, I’ll introduce a function called pnorm() that allows us to be a bit more precise than this. Specifically, it allows us to calculate a theoretical percentile rank for my grumpiness, as follows:

pnorm( 3.6 )

## [1] 0.9998409

At this stage, this command doesn’t make too much sense, but don’t worry too much about it. It’s not important for now. But the output is fairly straightforward: it suggests that I’m grumpier than 99.98% of people. Sounds about right.
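The numbers in this example can be checked directly in R. This assumes, as pnorm() does, that grumpiness is normally distributed with the mean and standard deviation given above. The lower.tail = FALSE option gives the proportion of people expected to be grumpier than a z-score of 3.6. Scaled up to a million people, that proportion comes out at roughly 159, close to the count quoted for my friend's sample of 1,000,000.

```r
raw <- 35; mu <- 17; sigma <- 5          # figures from the grumpiness example
z <- (raw - mu) / sigma                  # standard score: 3.6
pnorm( z )                               # proportion expected at or below z
pnorm( z, lower.tail = FALSE )           # proportion expected above z
per.million <- pnorm( z, lower.tail = FALSE ) * 1e6
per.million                              # expected count per million people
```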

In addition to allowing you to interpret a raw score in relation to a larger population (and thereby allowing you to make sense of variables that lie on arbitrary scales), standard scores serve a second useful function. Standard scores can be compared to one another in situations where the raw scores can’t.

Suppose, for instance, my friend also had another questionnaire that measured extraversion using a 24-item questionnaire. The overall mean for this measure turns out to be 13 with standard deviation 4, and I scored a 2. As you can imagine, it doesn’t make a lot of sense to try to compare my raw score of 2 on the extraversion questionnaire to my raw score of 35 on the grumpiness questionnaire. The raw scores for the two variables are “about” fundamentally different things, so this would be like comparing apples to oranges.

What about the standard scores? Well, this is a little different. If we calculate the standard scores, we get

$$
z = \frac{35 - 17}{5} = 3.6
$$

for grumpiness and